Photorealistic and Semantic 3D Scene Representation for Visual SLAM

Semester/Master's Project

This project aims to develop multi-robot SLAM capabilities that perform reliably in challenging real-world environments, forming the basis for autonomous navigation and coordination of a swarm of drones.

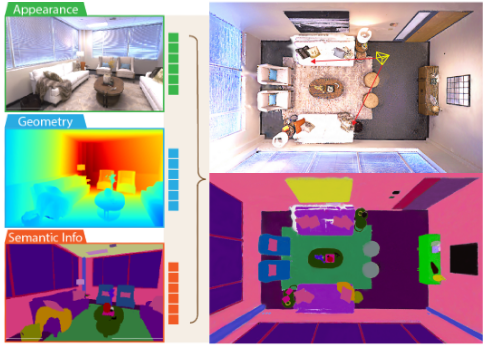

Semantic segmentation in an indoor environment

Background

The ability of a robot to build a persistent, accurate, and actionable model of its surroundings from sensor data in a timely manner is crucial for autonomous operation. Recently, the integration of radiance field rendering methods into Simultaneous Localization and Mapping (SLAM) has shown promising results, particularly for achieving high-fidelity 3D reconstruction with photorealistic rendering. At the same time, semantic segmentation provides dense and meaningful scene understanding, which is essential for robotics, AR/VR, and autonomous systems.

Description

This project focuses on combining radiance field-based reconstruction with semantic segmentation to enhance 3D scene understanding. The goal is to develop a SLAM pipeline that produces photorealistic and semantically informed 3D representations by integrating semantic cues directly into the training process.
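One common way to integrate semantic cues into training is to add a semantic term to the photometric loss used to optimize the radiance field. The sketch below illustrates that idea for a single pixel in plain Python. It is not the project's prescribed method: the function names, the choice of an L1 colour error, and the `semantic_weight` value are all assumptions made for illustration, and the per-pixel class logits are assumed to be rendered from the scene representation while the label comes from a 2D segmentation network.

```python
import math
from typing import Sequence


def photometric_l1(rendered_rgb: Sequence[float], observed_rgb: Sequence[float]) -> float:
    """Mean absolute colour error between a rendered and an observed pixel."""
    return sum(abs(r - o) for r, o in zip(rendered_rgb, observed_rgb)) / len(observed_rgb)


def semantic_cross_entropy(logits: Sequence[float], label: int) -> float:
    """Cross-entropy of one pixel's rendered class logits against a 2D label."""
    m = max(logits)  # subtract the max before exponentiating for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[label]


def joint_loss(
    rendered_rgb: Sequence[float],
    observed_rgb: Sequence[float],
    logits: Sequence[float],
    label: int,
    semantic_weight: float = 0.1,  # hypothetical weighting, to be tuned
) -> float:
    """Photometric loss plus a weighted semantic loss for one pixel."""
    colour_term = photometric_l1(rendered_rgb, observed_rgb)
    semantic_term = semantic_cross_entropy(logits, label)
    return colour_term + semantic_weight * semantic_term


if __name__ == "__main__":
    # Toy values: a slightly wrong colour and logits that favour class 2.
    loss = joint_loss(
        rendered_rgb=[0.8, 0.2, 0.1],
        observed_rgb=[0.7, 0.2, 0.2],
        logits=[0.1, 0.3, 2.0],
        label=2,
    )
    print(f"joint loss: {loss:.4f}")
```

In a real pipeline the same two terms would be computed over batches of rays with an automatic differentiation framework, so that gradients from both the colour and the semantic error update the scene representation.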

Work Packages

- The work will focus on state-of-the-art radiance field models and semantic segmentation methods, and on evaluating the resulting system across diverse environments.

Requirements

- Students taking this project need programming experience in Python and C++.