Odometry and Mapping in Dynamic Environments

Semester/Master's Project

The goal of this project is to develop a lidar-inertial odometry approach that tightly integrates dynamic object filtering into the pose estimation and mapping pipeline.

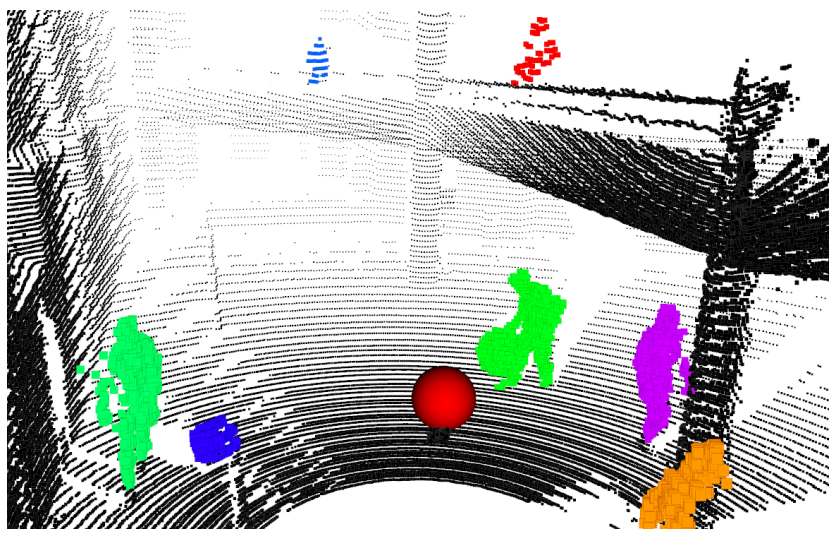

Detecting dynamic objects in a 3D map using Dynablox [2]

Background

Existing lidar-inertial odometry approaches (e.g., FAST-LIO2 [1]) estimate pose accurately enough in structured environments to capture high-quality 3D maps of static structures in real time. However, dynamic objects in the environment can reduce the accuracy of the odometry estimate and produce noisy artifacts in the captured 3D map. Existing approaches to handling dynamic objects [2-4] focus on detecting and filtering them from the captured 3D map but typically operate independently of the odometry pipeline, so the dynamic filtering does not improve pose estimation accuracy.

Description

How do robots navigate busy, ever-changing environments without getting confused by moving people, cars, or objects? In this project, you’ll build a system that fuses lidar (3D laser scans) and IMU data to estimate motion and create maps in real time, even when the world around the robot won’t stay still. You’ll dive into point cloud processing, sensor fusion, and dynamic object filtering, developing algorithms that can distinguish what’s static from what’s moving. If you’re interested in autonomous vehicles, drones, or real-world robotics challenges, this is your chance to work on SLAM systems that don’t break in the real world.

Work Packages

- Literature review of work on lidar-inertial odometry and dynamic object detection

- Develop a lidar-inertial odometry approach that can robustly handle dynamic environments

- Evaluate the performance of the approach in comparison with existing work

Requirements

- Experience with C++ and ROS

References

- [1] W. Xu, Y. Cai, D. He, J. Lin, and F. Zhang, “FAST-LIO2: Fast Direct LiDAR-inertial Odometry,” arXiv, 2021.

- [2] L. Schmid, O. Andersson, A. Sulser, P. Pfreundschuh, and R. Siegwart, “Dynablox: Real-Time Detection of Diverse Dynamic Objects in Complex Environments,” IEEE Robotics and Automation Letters, 2023.

- [3] D. Duberg, Q. Zhang, M. Jia, and P. Jensfelt, “DUFOMap: Efficient Dynamic Awareness Mapping,” IEEE Robotics and Automation Letters, 2024.

- [4] H. Lim, S. Hwang, and H. Myung, “ERASOR: Egocentric Ratio of Pseudo Occupancy-based Dynamic Object Removal for Static 3D Point Cloud Map Building,” IEEE Robotics and Automation Letters, 2021.